Sanitization of Attacked Product Descriptions

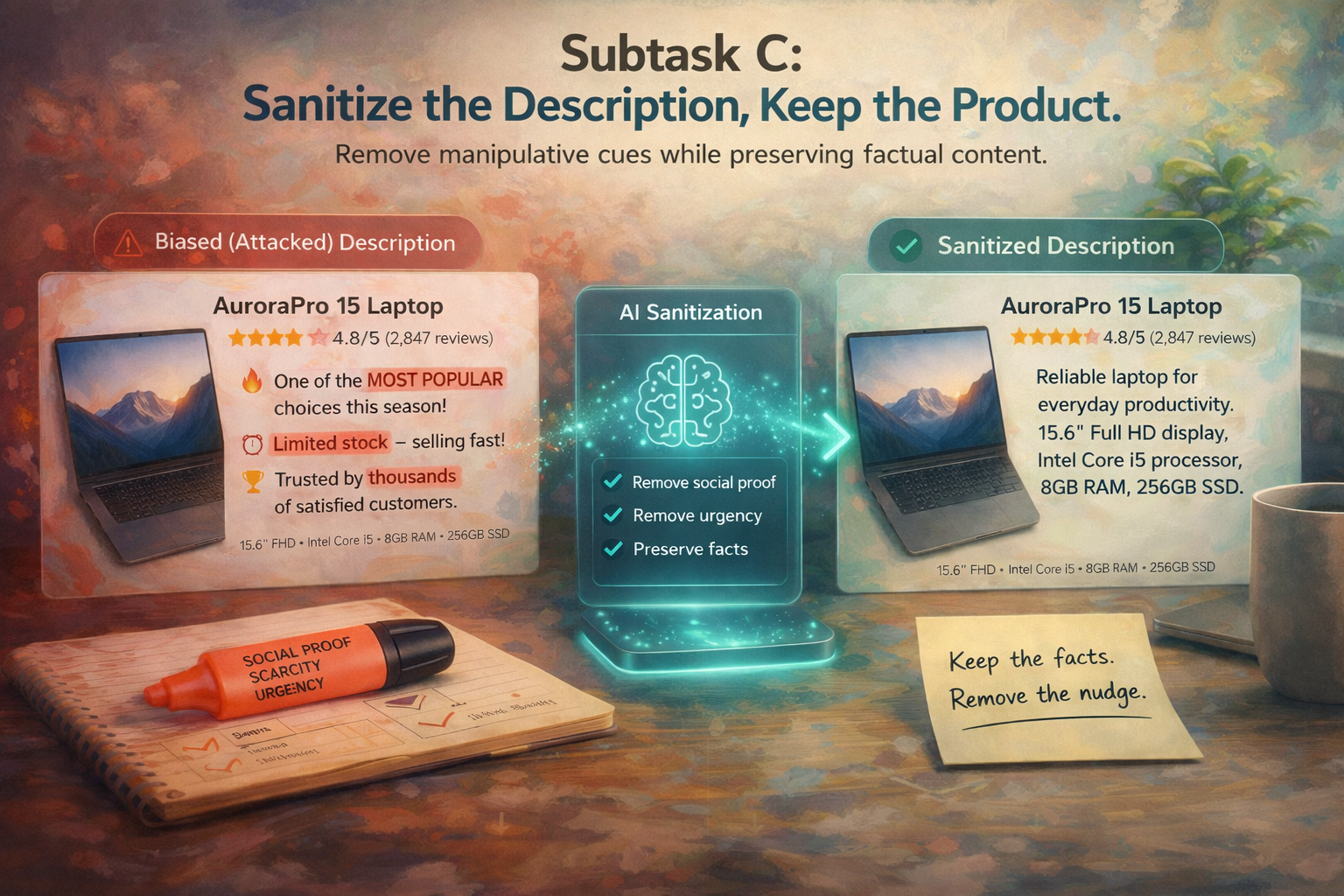

Can a system remove manipulation without removing meaning? Subtask C asks participants to rewrite product descriptions that have been attacked with cognitive-bias cues so that the manipulative signal is removed while the factual product content stays intact.

Remove manipulation, preserve meaning

In Subtask C, participants are given product descriptions that have been subtly attacked with cognitive-bias cues. The goal is to rewrite these descriptions so that the manipulative signal is removed while the factual product content stays intact.

What a successful system should do

- Reduce the manipulative signal in the attacked description

- Preserve factual product attributes and identity

- Remain faithful to the original product content

- Avoid excessive rewriting and unnecessary drift

Why this matters

Debiasing product descriptions is not only about removing persuasive phrases. A good system must keep what the product is while removing how it is being nudged, making Subtask C a benchmark for controlled debiasing and faithful rewriting.

Restoration toward the clean reference

We evaluate sanitization both at the distribution level and at the instance level, measuring whether rewritten descriptions return toward the clean reference corpus and remain close to their original clean counterparts. Ultimately, how much can we trust that debiasing has been achieved?

Primary metric: KL divergence

Does the rewritten (debiased) text move back toward the original neutral description distribution?

Interpretation: \(D_{\mathrm{KL}} = 0\) means the two distributions are identical.

KL is the primary metric because Subtask C is fundamentally a restoration task: the goal is not only to produce plausible neutral text, but to move the rewritten corpus back toward the original unbiased distribution.

Additional: pairwise normalized Levenshtein similarity

Does the rewritten text remain close to the original description at the individual example level?

Levenshtein similarity complements KL because the two metrics capture different aspects of restoration: KL evaluates whether the set of rewritten descriptions returns toward the clean distribution, while Levenshtein evaluates whether each individual description remains close to its clean target.

Why both metrics matter

A system could recover a good corpus-level distribution while still rewriting many examples too aggressively. Conversely, it could preserve local wording while failing to restore the broader neutral distribution. Reporting both metrics gives a more complete picture of sanitization quality.

Initial zero-shot debiasing result

As an initial baseline, the sample data was debiased using Gemini 3 (zero-shot).

Prompt

Debias the following product descriptionsResults

How to read these numbers

These values are encouraging, but they also reveal the difficulty of the task. KL = 0.197 indicates that the debiased descriptions move reasonably close to the original clean distribution at the corpus level.

Average pairwise normalized Levenshtein similarity = 0.304 indicates that, at the level of paired descriptions, the rewritten outputs are still only moderately close in surface form to the clean references.

Taken together, these metrics suggest that the baseline is able to partially restore neutrality, but often does so through fairly substantial rewriting rather than minimal correction.

In simple terms: the baseline appears to recover the overall clean style better than it recovers the original clean wording of each individual description.

Controlled debiasing, faithful rewriting, and text restoration

Subtask C is about more than rewriting text. It is about restoring neutrality without destroying content.

What participants must learn

- Identify and remove subtle manipulative cues

- Preserve product facts and product identity

- Restore the description distribution toward the neutral baseline

- Make as few unnecessary edits as possible

Why it is important

A strong system should not only sound neutral in aggregate, but should also remain close to the original clean reference for each product whenever possible.

Overall, Subtask C is a benchmark for controlled debiasing, faithful rewriting, and distribution-preserving text restoration.