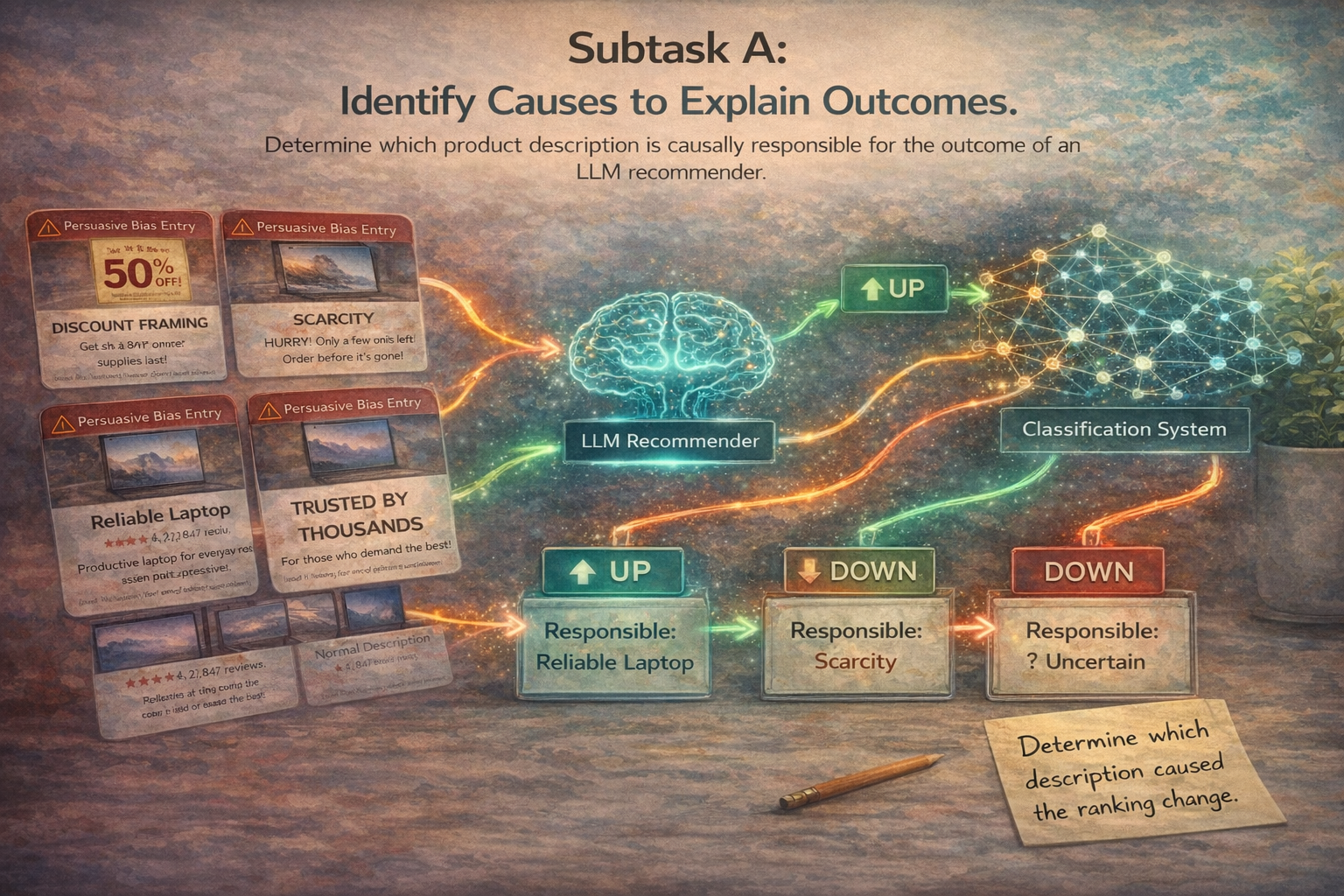

Causal Attribution

Can a system identify which biased product is causally connected to a recommendation outcome? Subtask A asks participants to reason about downstream effects and decide whether Description A, Description B, or neither can best explain the observed shift.

Identify the manipulated description behind the outcome

Participants are given pairs of attacked product descriptions, denoted Description A and Description B. The task is to determine whether the observed recommendation effect is better attributed to A, B, or Uncertain. In this setting, the recommendation effect refers to the change in a product’s ranking position before and after the attack. Each attacked product is therefore associated with one of three movement labels: Up, Down, or Same, indicating whether the product moved higher, lower, or remained at the same position after the cognitive-bias manipulation.

Prediction space

- A — description A is the better causal explanation

- B — description B is the better causal explanation

- Uncertain — the effect cannot be confidently attributed to one side

Why it is difficult

This is not a bias-detection task. Systems must reason about which manipulated input is most likely connected to the downstream recommendation change, making the problem substantially harder than simply recognizing persuasive language.

How the pilot data is built

The pilot begins from a clean control ranking and several attack-specific recommendation settings. Using ChatGPT 5.4 as the recommender, attacked products are compared against their positions in the control ranking and assigned one movement label: Up, Down, or Same.

Per attacked product

- attacked product description

- cognitive bias type

- movement label: Up, Down, or Same

Final pilot instance

If the movement labels differ, one side is randomly selected as the gold causal source. If the movement labels are the same, the gold label is Uncertain.

100 paired examples

For the pilot study, we sampled 100 paired examples.

Model roles

- ChatGPT 5.4 — recommender

- Gemini 3 — causal-attribution model

Expected output

Gemini 3 receives a pair of attacked descriptions and must output exactly one label: A, B, or Uncertain.

Multi-class causal classification

Predictions are evaluated against the gold labels using standard multi-class classification metrics.

Metrics

- Primary metric: Macro-F1

- Additional metrics: Accuracy, Balanced Accuracy, Per-class Precision / Recall / F1, Confusion Matrix

- Also reported: one-vs-rest ROC/AUC values for completeness

Per-class performance

Class A

- Precision: 0.167

- Recall: 0.316

- F1: 0.218

Class B

- Precision: 0.167

- Recall: 0.273

- F1: 0.207

Class Uncertain

- Precision: 0.100

- Recall: 0.021

- F1: 0.034

Confusion matrix

| pred_A | pred_B | pred_Uncertain | |

|---|---|---|---|

| true_A | 6 | 13 | 0 |

| true_B | 15 | 9 | 9 |

| true_Uncertain | 15 | 32 | 1 |

One-vs-rest ROC AUC

- A: 0.473

- B: 0.301

- Uncertain: 0.424

- Macro OVR AUC: 0.399

- Weighted OVR AUC: 0.392

Why these results matter

The pilot shows that causal attribution is substantially harder than bias identification. Humans can easily recognize explicit bias cues, but deciding which manipulated product is responsible for a downstream ranking effect is a much more demanding reasoning problem.

Key observations

- Gemini 3 performs well below a strong reliability threshold

- It does somewhat better on A and B than on Uncertain

- The model tends to over-commit instead of abstaining in ambiguous cases

- Classwise analysis is essential; overall accuracy alone is misleading

Pilot takeaway

The pilot confirms that Subtask A is non-trivial and meaningful. It is not solved by superficial pattern matching and supports BiasBeware as a benchmark for causal reasoning under biased language conditions.